Multi-Objective Optimization

- identify non-dominated individuals (individuals, for which in the multi-objective, is not dominated); this forms the “pareto frontier”

- create all combinations of input parameters, and create a pareto frontier for them

- identify a weighting between the variations you desire, and identify the elements which align with the Pareto frontier

Pareto Optimiality

Pareto Frontier

A Pareto frontier is the entire set of pareto optimal points—i. the set that’s not dominated.

Solving for Pareto Frontier

Constraint Method

We can convert this into a single-objective optimization problem; first, sort the constraints by order of importance:

\begin{align} \min_{x}&\ f_{1}(x) \\ s.t.&\ f_2(x) \leq c_2 \\ &\ f_3(x) \leq c_3 \\ &\ \dots \end{align}

we can set \(c_{j}\) to calibrate which point we want on our Pareto Frontier. By setting \(c_{j}\) large, we identify that we don’t care about that constraint as much; as we track \(c_{j}\) small, we start tracing out the Frontier along the \(j\) th direction. At some point, as you lower \(c_{j}\), we will run out of points because we would have left the criterion space.

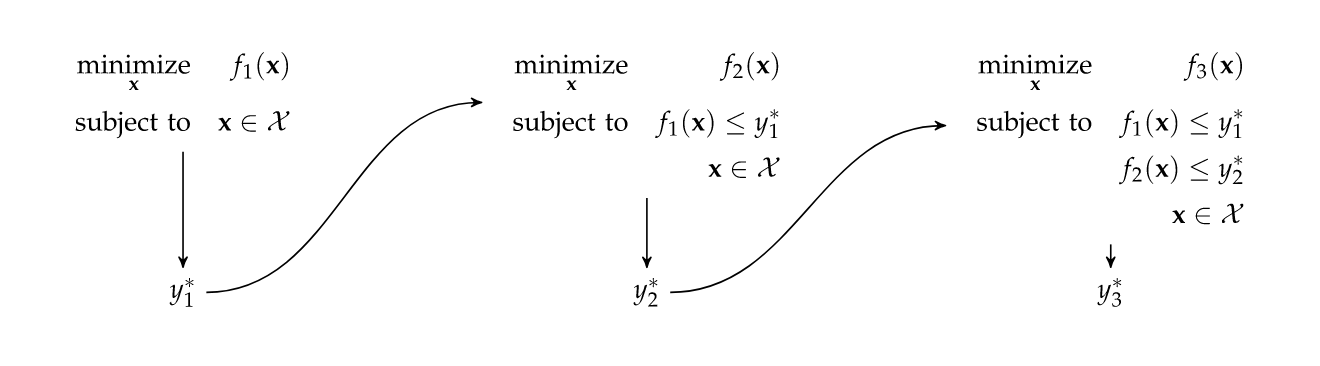

Lexicographic Method

Iteratively perform optimization; again sort constraints in order of importance, then:

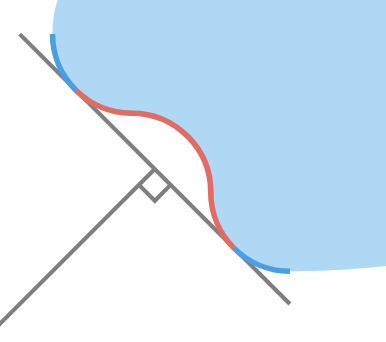

Weight Method

you can use a linear weight to obtain some Pareto optimal answers:

\begin{equation} f = w^{\top}\mqty[f_1 \\ \dots\\f_{N}] \end{equation}

this fits a line against the Pareto region, which will miss some points but will give some Pareto optimal answers—there will not be any weighting which preserves points in the zone.

Goal Programming

\begin{align} \min_{x \in \mathcal{X}} \mid f(x) - y^{goal} \mid_{p} \end{align}

just minimize some distance (L1, L2, L-inf norms are all good) to a “good” point, usually the Utopia Point.

L1 norms have the same problem as Weight Method as it is a line.

Utopia Point

The Utopia Point is the most optimal point, component wise.

Multi-Objective Population Method

Classic population method

- create \(m\) subpopulations

- optimize each population for one objective

- shuffle them together after each cohort’s optimization, create crossovers and mutations

This method is good to get the pareto frontier, but often we get clumping at the extremas of each objective. Often, we try to encourage dispersion.

Non-Dominating Ranking

You can rank all points (including those not on the Pareto Frontier), with

- pareto-frontier

- non-dominated except for 1)

- non-dominated except 1) or 2)

- …

Pareto filter

Identify points on the Pareto Frontier, and keep top k, perhaps with dispersion

Niche Technique

a niche disperses the design along the Pareto Frontier

- fitness sharing: penalize if neighbors are too close)

- equivalence class sharing: if two individuals are compared, their non-dominating ranks are compared first, then fitness sharing is used as a tie breaker