Bessel's Equation

Last edited: August 8, 2025

\begin{equation} x^{2}y'' + xy' + (x^{2}-n^{2})y = 0 \end{equation}

This equation is very useful, but in general its solutions (the Bessel functions) have no closed form in terms of elementary functions.
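With no elementary closed form available, one common way to evaluate a solution is the power series of the Bessel function of the first kind, \(J_{n}(x) = \sum_{m=0}^{\infty} \frac{(-1)^{m}}{m!\,\Gamma(m+n+1)}\left(\frac{x}{2}\right)^{2m+n}\). The sketch below is a minimal illustration, assuming \(n \geq 0\) and \(x > 0\). The 40-term truncation and the finite-difference step `h` are arbitrary choices that are only adequate for moderate \(x\). It plugs the truncated series back into the equation to check that the residual is close to zero.

```python
import math


def bessel_j(n: float, x: float, terms: int = 40) -> float:
    """Bessel function of the first kind J_n(x), from its truncated power series.

    Assumes n >= 0 and x > 0 so every term is real and Gamma(m + n + 1) is finite.
    """
    total = 0.0
    for m in range(terms):
        coeff = (-1) ** m / (math.factorial(m) * math.gamma(m + n + 1))
        total += coeff * (x / 2) ** (2 * m + n)
    return total


def bessel_residual(n: float, x: float, h: float = 1e-4) -> float:
    """Plug J_n into x^2 y'' + x y' + (x^2 - n^2) y, using central differences for y' and y''."""
    y_minus, y, y_plus = bessel_j(n, x - h), bessel_j(n, x), bessel_j(n, x + h)
    dy = (y_plus - y_minus) / (2 * h)
    d2y = (y_plus - 2 * y + y_minus) / h ** 2
    return x ** 2 * d2y + x * dy + (x ** 2 - n ** 2) * y


if __name__ == "__main__":
    for order in (0, 1, 2.5):
        print(order, bessel_residual(order, 3.0))  # each residual should be close to 0
```

In practice a library routine such as SciPy's `scipy.special.jv` is the usual choice; the hand-rolled series only shows where the values come from.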

best-action worst-state

Best-action worst-state is a lower bound for alpha vectors:

\begin{equation} r_{baws} = \max_{a} \sum_{k=1}^{\infty} \gamma^{k-1} \min_{s}R(s,a) \end{equation}

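The corresponding alpha vector has the same value \(r_{baws}\) in every entry (one entry per state).

This is the highest return we can guarantee if we always take the single best action while being stuck in the worst state for that action at every step. The optimal policy does at least this well, which is why \(r_{baws}\) is a valid lower bound.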
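Because the summand does not depend on \(k\), the geometric series collapses for \(0 \leq \gamma < 1\): \(r_{baws} = \frac{1}{1-\gamma}\max_{a}\min_{s}R(s,a)\). A minimal sketch, assuming a finite POMDP whose rewards are given as a hypothetical table `R[s][a]`:

```python
def baws_lower_bound(R: list[list[float]], gamma: float) -> float:
    """Best-action worst-state bound r_baws = max_a min_s R(s, a) / (1 - gamma).

    R[s][a] is the immediate reward for action a in state s (hypothetical table layout).
    """
    if not 0.0 <= gamma < 1.0:
        raise ValueError("the geometric series only converges for 0 <= gamma < 1")
    n_states, n_actions = len(R), len(R[0])
    # Worst-case per-step reward of each action, over all states.
    worst_per_action = [min(R[s][a] for s in range(n_states)) for a in range(n_actions)]
    # Best of those worst cases, received forever: sum_k gamma^(k-1) = 1 / (1 - gamma).
    return max(worst_per_action) / (1.0 - gamma)


def baws_alpha_vector(R: list[list[float]], gamma: float) -> list[float]:
    """The corresponding alpha vector: r_baws repeated once per state."""
    return [baws_lower_bound(R, gamma)] * len(R)


if __name__ == "__main__":
    R = [[1.0, 2.0],   # state 0: rewards for actions 0 and 1
         [3.0, -1.0]]  # state 1
    print(baws_alpha_vector(R, 0.9))  # action 0's worst case is 1.0, so roughly [10.0, 10.0]
```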

BetaZero

Background

Recall AlphaZero’s MCTS loop:

- Selection (UCB1, DPW, etc.)

- Expansion (generate possible belief nodes)

- Simulation (if it’s a brand-new node, do a rollout, etc.)

- Backpropagation (propagate your values back up the tree)
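As a reminder of what the selection step computes, here is a minimal UCB1 sketch. It assumes each child is summarized by a hypothetical `(visit_count, total_value)` pair and uses the sum of child visits as the parent count. AlphaZero itself uses a PUCT variant weighted by the network’s prior, and progressive widening is not shown.

```python
import math


def ucb1_select(children: list[tuple[int, float]], c: float = math.sqrt(2)) -> int:
    """Return the index of the child to descend into, chosen by UCB1.

    children[i] = (visit_count, total_value) for action i (hypothetical node layout).
    """
    # Unvisited actions have an infinite UCB1 score, so try them first.
    for i, (visits, _) in enumerate(children):
        if visits == 0:
            return i
    # Approximate the parent's visit count by the sum of its children's visits.
    parent_visits = sum(visits for visits, _ in children)

    def score(i: int) -> float:
        visits, total = children[i]
        exploit = total / visits                                   # mean value so far
        explore = c * math.sqrt(math.log(parent_visits) / visits)  # exploration bonus
        return exploit + explore

    return max(range(len(children)), key=score)


if __name__ == "__main__":
    # A well-explored action with mean 0.6 vs. a barely explored one with mean 0.5:
    print(ucb1_select([(100, 60.0), (1, 0.5)]))         # exploration bonus favours index 1
    print(ucb1_select([(100, 60.0), (1, 0.5)], c=0.0))  # pure exploitation picks index 0
```

The constant `c` trades off exploration against exploitation; \(\sqrt{2}\) is the textbook default, not a tuned value.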

Key Idea

Remove the need for heuristics in MCTS, and with them the inductive bias they introduce.

Approach

We keep the ol’ neural network, which maps a belief \(b_{t}\) to a policy \(p_{t}\) and a value \(v_{t}\):

\begin{equation} f_{\theta}(b_{t}) = (p_{t}, v_{t}) \end{equation}

Policy Evaluation

Run \(n\) episodes of MCTS, then use a cross-entropy loss to improve \(f_{\theta}\).

Ground truth policy

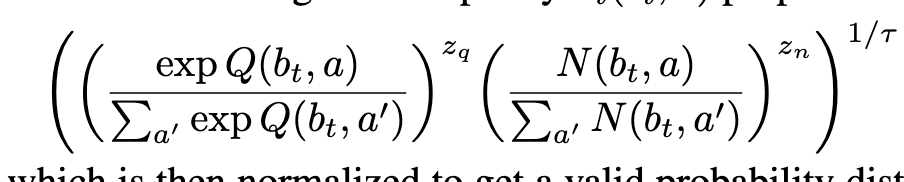
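A minimal sketch of the policy target and loss, assuming (as in AlphaZero) that the ground-truth policy is the root visit counts normalized with a temperature \(\tau\), \(\pi(a) \propto N(a)^{1/\tau}\). The function names are hypothetical, and the value head would be trained with a separate loss not shown here.

```python
import math


def visit_count_policy(counts: list[int], tau: float = 1.0) -> list[float]:
    """Target policy pi(a) proportional to N(a)^(1/tau) from root visit counts N(a)."""
    weights = [n ** (1.0 / tau) for n in counts]
    total = sum(weights)  # assumes at least one action was visited
    return [w / total for w in weights]


def cross_entropy(target: list[float], predicted: list[float], eps: float = 1e-12) -> float:
    """H(target, predicted) = -sum_a target(a) * log predicted(a); eps guards against log(0)."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(target, predicted) if t > 0)


if __name__ == "__main__":
    target = visit_count_policy([3, 1, 0])  # [0.75, 0.25, 0.0]
    network_policy = [0.5, 0.3, 0.2]        # hypothetical p_t from f_theta(b_t)
    print(cross_entropy(target, network_policy))  # the loss to minimize
```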

Action Selection

Beyond Worst-Case Analysis

Assuming \(P \neq NP\), there is no polynomial-time algorithm \(A\) such that for all 3CNF \(\varphi\), \(A\qty(\varphi)\) accepts if and only if \(\varphi\) is satisfiable.

How do we relax this?

- we can say “for most” 3CNF formulas, which means we have to specify a distribution over formulas

- we can settle for satisfying “most” of \(\varphi\), meaning we find an assignment that satisfies most clauses of \(\varphi\)

- allow more than polynomial time (the SoTA runs in time \(1.34\dots^{n}\)).

PCP Theorem

\(P \neq NP\) implies there is no polynomial-time algorithm that, given a satisfiable 3CNF \(\varphi\), finds an \(x\) satisfying \(99.9\%\) of its clauses. In fact, even more holds: for every \(\varepsilon > 0\), no polynomial-time algorithm finds an \(x\) satisfying a \(\geq \frac{7}{8} + \varepsilon\) fraction of the clauses.
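The \(\frac{7}{8}\) threshold is exactly what is easy: when every clause has three distinct variables, a uniformly random assignment satisfies each clause with probability \(1 - 2^{-3} = \frac{7}{8}\), and the method of conditional expectations turns that into a deterministic polynomial-time algorithm. A minimal sketch, assuming clauses are tuples of signed integers (a DIMACS-style encoding chosen here for illustration):

```python
from fractions import Fraction

# A clause is a tuple of non-zero ints: v means x_v and -v means NOT x_v
# (a DIMACS-style encoding chosen for illustration). Each clause is assumed
# to mention three distinct variables.


def clause_sat_prob(clause: tuple[int, ...], partial: dict[int, bool]) -> Fraction:
    """Probability the clause is satisfied if unassigned variables are uniformly random."""
    unassigned = 0
    for lit in clause:
        var = abs(lit)
        if var in partial:
            if partial[var] == (lit > 0):
                return Fraction(1)  # already satisfied by the fixed variables
        else:
            unassigned += 1
    # Fails only if every unassigned literal comes out false.
    return 1 - Fraction(1, 2 ** unassigned)


def expected_satisfied(clauses, partial) -> Fraction:
    return sum((clause_sat_prob(c, partial) for c in clauses), Fraction(0))


def conditional_expectations(clauses, n_vars: int) -> dict[int, bool]:
    """Fix variables one at a time, never letting the expected satisfied count drop."""
    partial: dict[int, bool] = {}
    for var in range(1, n_vars + 1):
        partial[var] = True
        if_true = expected_satisfied(clauses, partial)
        partial[var] = False
        if_false = expected_satisfied(clauses, partial)
        partial[var] = if_true >= if_false
    return partial


def fraction_satisfied(clauses, assignment: dict[int, bool]) -> Fraction:
    satisfied = sum(any(assignment[abs(lit)] == (lit > 0) for lit in c) for c in clauses)
    return Fraction(satisfied, len(clauses))


if __name__ == "__main__":
    phi = [(1, 2, 3), (-1, 2, -3), (1, -2, 4), (-2, -3, -4), (1, 3, 4)]
    print(expected_satisfied(phi, {}))  # 35/8, i.e. 7/8 of the 5 clauses
    x = conditional_expectations(phi, n_vars=4)
    print(fraction_satisfied(phi, x))   # guaranteed to be at least 7/8
```

The guarantee follows because the current expectation is the average of the two branches, so the larger branch never falls below it; once every variable is fixed, the expectation is the actual count.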

Big Data

Big Data is a term for datasets large enough that traditional data processing applications are inadequate, i.e., when non-parallel processing is inadequate.

That is, “Big Data” is when Pandas and SQL are inadequate. With big data, it’s very difficult to go through and process records sequentially; to make it work, you usually have to perform parallel processing under the hood.
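A toy split/map/reduce word count illustrates the pattern: split the data into chunks, process each chunk independently, then merge the partial results. This is a sketch only. Threads keep it self-contained, whereas real big-data systems distribute chunks across processes or machines (Python threads do not speed up CPU-bound work because of the GIL).

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_chunk(lines: list[str]) -> Counter:
    """Map step: count words in one chunk, independently of every other chunk."""
    counts: Counter = Counter()
    for line in lines:
        counts.update(line.split())
    return counts


def parallel_word_count(lines: list[str], chunk_size: int = 2, workers: int = 4) -> Counter:
    # Split step: cut the input into independent chunks.
    chunks = [lines[i:i + chunk_size] for i in range(0, len(lines), chunk_size)]
    total: Counter = Counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Chunks are processed concurrently; the reduce step merges the partial counts.
        for partial in pool.map(map_chunk, chunks):
            total.update(partial)
    return total


if __name__ == "__main__":
    docs = ["big data is big", "pandas is not enough", "data data data"]
    print(parallel_word_count(docs).most_common(2))
```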

Rules of Thumb of Datasets

- 1000 Genomes (AWS, 260 TB)

- Common Crawl: the entire web (on PSC! 300-800 TB)

- GDELT (https://www.gdeltproject.org/): a dataset that contains everything that’s happening in the world right now, in terms of news (small!! 2.5 TB per year; however, there are a LOT of fields: 250 million fields)

Evolution of Big Data

Good Ol’ SQL

- schemas are too set in stone (“not a fit for Agile development,” says a research scientist)

- SQL sharding, when working correctly, is

KV Stores

And this is why we gave up and made Redis (or Amazon DynamoDB, Riak, Memcached), which stores only key/value information. To support structure, we just make the key really, really complicated: GET cart:joe:15~4...
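A toy illustration of encoding structure in keys, assuming a plain Python dict as the store and a hypothetical `cart:<user>:<item>` key scheme (this is not the elided key format above, and not any real store’s API):

```python
from typing import Optional


class ToyKVStore:
    """In-memory stand-in for a key/value store: values are opaque, only keys are indexed."""

    def __init__(self) -> None:
        self._data: dict[str, object] = {}

    def set(self, key: str, value: object) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[object]:
        return self._data.get(key)

    def keys_with_prefix(self, prefix: str) -> list[str]:
        # Linear scan over all keys; a real store would need its own mechanism for this.
        return sorted(k for k in self._data if k.startswith(prefix))


def cart_key(user: str, item_id: int) -> str:
    """Hypothetical key scheme: pack the 'table', user, and item into one string."""
    return f"cart:{user}:{item_id}"


if __name__ == "__main__":
    store = ToyKVStore()
    store.set(cart_key("joe", 15), 2)  # joe has 2 of item 15 in his cart
    store.set(cart_key("joe", 42), 1)
    store.set(cart_key("ann", 15), 5)
    print(store.get("cart:joe:15"))             # 2
    print(store.keys_with_prefix("cart:joe:"))  # ['cart:joe:15', 'cart:joe:42']
```

The “schema” now lives entirely in the key format, so every reader and writer has to agree on it by convention.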